Last year, when I got burned out on programming, I took five months off to try to learn how to draw. I had tried to learn twice before, but for various reasons I associated those attempts with painful stuff, so I had a mental block about going back and learning.

Two months after I decided to take the rest of the year off, I found out about Inktober. It is a drawing challenge, created in 2009, in which artists practice their pen and ink skills by doing a piece every day during the month of October. There is a prompt list, and people post their drawings to social media.

I wasn’t going to post things to social media, but it looked like fun. I picked up some supplies and was getting geared up to participate in 2020. Then bad stuff happened, because of course.

First, the creator of Inktober was accused of plagiarizing a book on pen and ink drawing written by a black artist. The Inktober guy's book was pulled from production and no one has seen it, so I'm not 100% sure it was plagiarized, but it looked pretty damning based on the available evidence. That dampened everyone's enthusiasm for the event, because no one wanted to support a white dude stealing intellectual property from a black artist.

The second thing that happened was that my husband and I had a miscommunication, and he asked me to paint parts of the house before winter. Winter is difficult, and our house had the HGTV aesthetic of lots of tasteful neutrals, which, fuck that. So a week into the challenge I had to go to the paint store, put tape everywhere, and try to paint. I had a meltdown and only did about a third of the painting before I couldn't deal anymore. I never got back to the challenge after that, which was a bummer for me.

So I have been looking forward to doing this for the past year. I have spent the past few months trying to figure out my supplies and what I want to draw.

First, I had to figure out a sketchbook. I knew from last year that it was really difficult to find the right kind of paper to use with a dip pen. Technically, ink is a fluid medium, so you need some kind of paper designed for wet media, such as mixed media or watercolor paper. I used mixed media paper last year and had a terrible time, because the rough texture of the paper meant that fibers kept clogging the nib of the dip pen.

Ideally, I wanted either hot-pressed watercolor paper (which is smooth) or Bristol board, which is what comic artists use for their finished pieces. Finding either of these as a sketchbook was incredibly challenging.

Artists tend to dislike hot-pressed watercolor paper because the paint spreads quite a lot and doesn't have the distinctive watercolor feel. With Bristol board, people tend to use individual sheets to render a page of comic art rather than buy a whole sketchbook of the stuff.

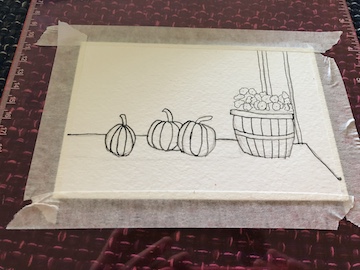

I had resigned myself to just using a watercolor sketchbook and hoping for the best when I found my perfect solution. Viviva, a company in India that makes sheets of watercolor paint, was this year's sponsor for Inktober and offered a bundle of supplies for the event. One of those supplies was an actual hot-pressed watercolor paper sketchbook! The company founder did a video about the products and said they created this one specifically because, over the years, other inkers had voiced the same complaints I have, and there was now an actual market for such a thing. Score!

Next, I will go over pens.

Okay, I will be honest about something. One of the reasons I got interested in drawing is that I love pens. I love art supplies in general, but I have always loved pens. I was the weird kid in high school with a $50 fountain pen, converters, and bottles of ink. I am just old enough that we didn't use computers for note-taking in high school, and when that became a thing in college, I found not being able to physically write and sketch out my thoughts quite wrenching.

I am on the autism spectrum, and I have sensory issues and sensitivities. I love the feel of a pen moving across the surface of paper, and doing this soothes me when I am overwhelmed. I usually bring cross stitch with me to conferences for this purpose, but I would really like to be able to draw instead, since it would be less obtrusive and easier to transport.

So choosing the right delivery medium for my ink was very important to me. Last year I got rather confused because I didn't know how to fill in large dark areas with just hatching. I didn't realize that people use Sharpies or brushes to fill in those areas and save hatching for the shaded parts. I primarily used Micron fineliner pens last year. That was okay, but I think I have a better solution this year.

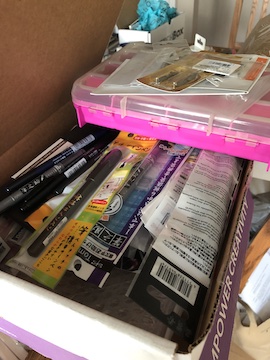

I will go over these in no particular order.

All of the pens that have Japanese characters on them are some form of brush pen. Some have actual bristles, while others have a flexible nib that lets you get different line weights and variations. I'm trying to learn Japanese through Duolingo, and their writing system has a lot more line variation than ours does, so their pens tend to be a lot more expressive. Also, the Japanese are very stringent about penmanship and craftsmanship, so if you're looking for something well made and delightful to use, you usually can't go wrong with something made in Japan.

I also have several dip pens. I went out and bought the actual nibs that manga artists use for their work. There are three main types, each with different characteristics. The most common one to find is the G nib, which tends to be used for bolder, more dynamic manga. I also have a maru nib on the brown nib holder. It is tiny and got stuck on the holder, and if I tried to remove it I would wind up destroying it with pliers, so it stays until I break it.

Finally, I have a Winsor & Newton Series 7 Kolinsky sable brush. I kept reading online that artists use it for inking, but I am afraid to actually use it because I know the ink will stain it, which I know is illogical, because inking is literally the only reason I bought it! I might use one of my cheaper, crappier brushes until I work up the courage to break out the W&N brush.

Last, but not least, we have the ink in Inktober.

Last year I wasn’t really sure what I was doing, so I bought a bunch of brightly colored inks in various forms. I thought it would be like when I was in high school and I could use pink ink in my fountain pen for writing, but that is really not the case. Most of the colored inks I bought are for painting or doing ink washes on my finished drawings.

So this year I am trying to keep it simple and use some waterproof black inks.

I got a Sennelier black India ink in this month's SketchBox, and I am excited to try it. Last year I got carried away when I realized you could buy India ink by the pint, and I got a pint of Speedball Super Black ink for $13. Over the years I have also accumulated a collection of various black sumi inks, which are used in manga. I haven't used them yet, so I would like to try a few things to see which I like best.

Since there has been quite a bit of ugliness around the official Inktober stuff, I am not planning to follow the prompts. Instead, I am planning to draw various scenes from the TV show Twin Peaks. Since David Lynch comes from a fine art background, his films and shows tend to be more like surreal art pieces than story-driven shows. There are a lot of iconic shots from the show, and I think it would be good practice to try to render them.

I am really excited to have a full month to play around with these supplies. I am fascinated by comic art, but it is also somewhat unapproachable, because I don't know how one actually goes about producing it. I am excited to give this a shot. I might also be way too influenced by Monthly Girls' Nozaki-kun…