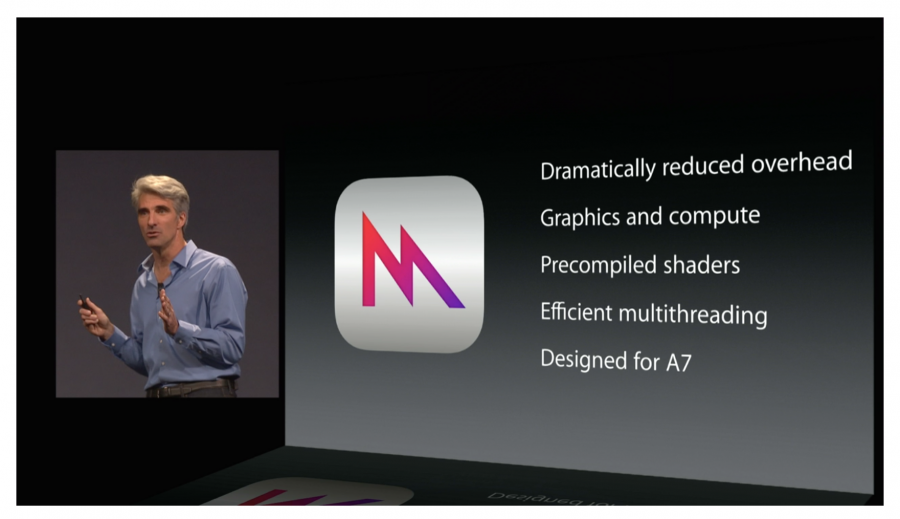

Every year it happens. WWDC comes and unleashes a bunch of new stuff for all of us to learn. For most people the last few years, WWDC hasn’t been that exciting because it’s primarily been graphics and augmented reality stuff. For me, it’s been Christmas. I love Metal and ARKit and all the new shiny graphics stuff that gets released each year.

However, inevitably I will encounter some aspect of my workflow that doesn’t involve low-level graphics. I will have to connect to a network backend. I will have to figure out the new user interface framework.

I want to get back to my fun graphics stuff as quickly as possible, so I Google tutorials and sample code for these things I don’t want to think too much about. The results come up. Perfect! I found exactly what I want!

I click the link and my heart sinks. Instead of a nicely written blog post or article about the technology, I stare into the void of a 5+ hour video course someone put together.

Videos Are Terrible

The last few decades have shown the emergence of this idea of different learning styles. Some people are “visual learners,” whatever that means. Some people learn things by doing, which to be honest is basically how everyone learns?

This has unleashed this unholy deluge of instructional videos for the poor, underserved portion of the population that learns by watching other people talk. These are probably the same people who learn by listening to the same three white dudes in tech talk about cars on podcasts.

I’m sure there is some portion of the population that learns by watching videos. And videos have their place. I love watching short cooking videos. Cooking is a physical thing and watching how someone folds a dumpling or condenses a three-hour baking project into a three-minute video that cuts out all the prep work and waiting can be quite instructive. None of that applies to programming.

Programming isn’t suited to a video medium

I know that someone who won’t read this blog post is going to “Well, actually…” me on how video is great for programming because it shows you how to use the IDE/game engine/etc… I am not talking about videos on how to use storyboards or set up an animation loop in Unity. I am talking about code. Words you type into a text editor or IDE with no software interface.

Programming is inherently word-based. I have used the analogy that programming is what you would get if Math and English had a baby. It’s got language and syntax and grammar. All of which gets lost in translation when you have someone trying to read it out loud.

Every bad tech talk I have attended at conferences goes the same way. The presenter spends a few minutes introducing the topic of their talk and gets everyone excited about using the framework. Then they pull out all the slides covered with code and it all goes downhill from there. People’s eyes glaze over. They start chatting on Slack. They surf Facebook. Everyone waits for it to be over, but hey, beats being at work I guess?

Code wasn’t meant to be read out loud. It’s the most efficient compromise you make with the computer to be able to communicate. You can read through code and bounce around asynchronously to see how everything works in a way you can’t if someone is just talking.

If you have a nicely written tutorial and you didn’t quite get something, you can scroll back to see where you got lost and review what you don’t understand.

Which leads into the next point.

You can’t search for what you need

It’s incredibly frustrating to spend hours looking for something that covers the exact topic you need, only to find out that the presenter isn’t going to cover the part that you need. When you have a video, you have no idea what the specifics are that the presenter will actually cover. I went to an Accelerate talk where the presenter said that he would not cover any of the math that the framework utilizes, which is basically the entire point of the framework!

If I am dealing with a book or documentation, I can utilize a search function to see if the document I am looking at contains what I need. I can zero in directly on the part that I need to understand and be on my way in a few minutes. If what I want isn’t there, I haven’t really invested anything into it.

If you’re dealing with a video, it’s a crapshoot as to whether the author is going to speak about the one specific thing you need to know at any point in their long, rambling video. Sometimes they are helpful and will give chapter titles so you can guess if they’re going to talk about the thing you need to do. But usually the author has created an elaborate project that is dependent upon you watching everything up until that point for anything to make any sense.

You can’t do anything else

I learned to code by working through the Big Nerd Ranch iOS book multiple times. I didn’t quite understand the concepts the first time through, so I would do them again and again. By the time I got to the fourth or fifth time, my brain had been introduced to the concepts enough that it began to understand how everything works together.

To keep myself from going insane, I would throw on episodes of Star Trek: The Next Generation. I worked through the entire series by having an episode on while I coded through a tutorial. This worked for me.

If I have an instructional video on, I literally can’t do anything else. I’m held hostage. You can’t read or check email because you miss something and have to rewind to figure out what someone said. If you’re coding along, you have to keep pausing and rewinding to catch what the person said. You endure long bits of watching someone writing the same code as you in real time. This is miserable and tedious. Not to mention mentally exhausting in a way that reading is not.

I do have some Unity videos I will throw on in the background, but I do this knowing that I am not engaged enough with the material to actually learn it. I can’t do this for something I must use for an active project.

If I am working with written materials, I can take mental breaks for a few minutes and come back. I can copy/paste code if I don’t quite get it written correctly. I can reread things a few times if I didn’t quite get it the first time. I can have on music or do literally anything else to make it easier to stand at my computer for hours force feeding myself technical information.

Back when I used to work for people, I would be asked to figure out something I didn’t already know. Rather than flailing blindly around, I would look for something written that I could look through to learn the things I needed to get working immediately.

It’s a lot easier to sell your employer on the idea that you’re actually working if you’re reading something and have a code editor open. If you’re watching a video, it looks like you’re slacking off. It also means that if someone interrupts you, it’s way more disruptive than if you are reading something. In the age of surveillance capitalism, it looks bad if you try to learn something by watching a video. This is not the case if you are reading something. Perception matters.

The podcast problem

I don’t listen to podcasts, which is ironic because I have hosted three of them. Podcasts tend to be two or more people rambling about some topic and making in-jokes that only they find funny, where nothing is edited out. Sometimes you get someone who knows enough audio production that they will fix levels and remove long awkward gaps in conversation, but rarely do you get a well-focused discussion about a topic with an interviewer who not only knows what questions to ask but who will keep the conversation on track.

A lot of instructional videos are people just kind of turning on a screen capture app who then begin to work on a demo project they feel like working on. There isn’t really an agenda for them to follow. They don’t think about how best to present information. They leave in their coding errors.

It’s this idea that it’s a lot easier to just record yourself talking than it is to have a well thought out plan as to how you are going to take a complicated topic and explain it to someone who doesn’t know everything you do.

Final Thoughts and Complaints

I really do not understand why this video explosion has happened. I don’t know if it’s similar to that Facebook pivot to video ads where they lied to people about the impact of video and everyone dove in wallet first and got screwed over.

It may just be easier to half-ass a video than it is to write a good tutorial. There was that ghastly Hacker News bit saying that a group of coders could start up a newsroom in a month, which was totally bullshit. Writing is a skill. A lot of people are not good at explaining things. Everyone complains about the quality of Apple’s documentation coverage, but think about how hard it is to scale up a team of people who are not only technically savvy enough to understand all the code but can also write clearly. There are a limited number of people out there who can do that.

That isn’t to say you can’t improve. The first few things you write might be terrible, but if you know they’re terrible and it bothers you, you can work to improve them.

I dunno. I might just be a crusty old person who is stuck in my ways yelling at the kids to get off my lawn. But after they do I am going back to my she shed with my programming books and my 600 queued episodes of Chopped.